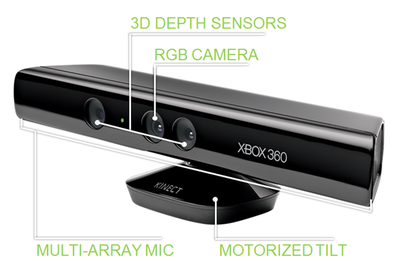

An example of motion control with structured light is Microsoft's Kinect for the Xbox 360 (the picture to the left is from this website). The technology (United States Patent) was developed by PrimeSense, an Israeli 3D sensing company that Apple Inc. bought for $350 million on November 24, 2013 (Wiki).

The details of this technology are not publicly available; the description below is based on my understanding and may be wrong.

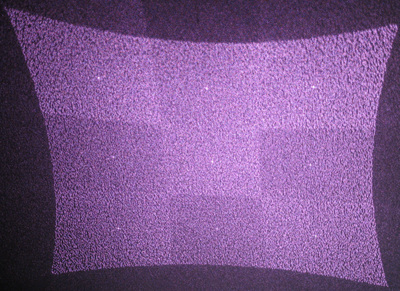

The key step is to generate a structured light pattern (image to the right) in the 3D space where the player will stand. One key optical element inside the device consists of two elegant 2D diffractive gratings placed in sequence and illuminated by an infrared light source. The light pattern on the right of the image above was obtained by imaging the pattern that the Kinect projects onto a wall. It consists of a 3 by 3 array of sub-patterns, each filled with random spots.

The first grating is designed to generate an array of random light spots. This can be achieved by carefully designing the inner structure of each period of the grating. As we know, the period of a grating determines the highest diffraction order it can generate (and hence the number of available spots) as well as the diffraction angles. The inner structure of each period determines the relative intensity distribution among these diffraction orders. The inner structure can be calculated with a well-known global optimization method, such as simulated annealing or a genetic algorithm, to generate a large number of diffraction spots with equal intensities. The concept described here is easy to follow if you are familiar with binary optics.
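To make the optimization step concrete, here is a minimal 1D sketch in Python. It is not the PrimeSense design. It assumes a thin binary-phase grating (each cell in a period delays the light by 0 or π), a scalar Fourier model in which the efficiency of order m is the squared magnitude of the m-th Fourier coefficient of one period, and a toy cost function of my own choosing. The names `order_efficiencies`, `cost`, and `anneal`, and all parameter values, are hypothetical.

```python
import cmath
import math
import random


def order_efficiencies(phases, orders):
    """Power fraction sent into each order by one grating period made of
    equal-width cells with the given phase delays (thin, scalar model)."""
    n = len(phases)
    effs = []
    for m in orders:
        # Fourier coefficient of exp(i*phase(x)) over one period.
        u = m / n
        sinc = 1.0 if m == 0 else math.sin(math.pi * u) / (math.pi * u)
        total = sum(
            cmath.exp(1j * p - 2j * math.pi * m * (k + 0.5) / n)
            for k, p in enumerate(phases)
        )
        effs.append(abs(sinc * total / n) ** 2)
    return effs


def cost(phases, orders):
    """Toy cost (an assumption): penalize light lost outside the target
    orders and unequal intensity among the target orders."""
    effs = order_efficiencies(phases, orders)
    return (1.0 - sum(effs)) + 2.0 * (max(effs) - min(effs))


def anneal(n_cells=32, orders=range(-3, 4), steps=2000,
           t_start=0.05, t_end=1e-4, seed=0):
    """Simulated annealing over binary (0 or pi) cell phases."""
    rng = random.Random(seed)
    orders = list(orders)
    phases = [rng.choice((0.0, math.pi)) for _ in range(n_cells)]
    current = initial = cost(phases, orders)
    best, best_phases = current, phases[:]
    for step in range(steps):
        # Geometric cooling from t_start down to t_end.
        t = t_start * (t_end / t_start) ** (step / steps)
        k = rng.randrange(n_cells)
        phases[k] = math.pi - phases[k]          # flip 0 <-> pi
        trial = cost(phases, orders)
        if trial <= current or rng.random() < math.exp((current - trial) / t):
            current = trial                      # accept the move
            if current < best:
                best, best_phases = current, phases[:]
        else:
            phases[k] = math.pi - phases[k]      # reject: undo the flip
    return best_phases, best, initial


if __name__ == "__main__":
    found, best_cost, start_cost = anneal()
    print("cost: start", start_cost, "best", best_cost)
    print("efficiencies:", order_efficiencies(found, range(-3, 4)))
```

Calling `anneal()` returns the best cell phases found, their cost, and the cost of the random starting point. A real design would be 2D, would probably use more phase levels, and would need a rigorous diffraction model once the features approach the wavelength.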

The first grating generates only one random array. The second grating spreads and duplicates this random array into a 3 by 3 array. The beauty of the design is that the periods of the two gratings are carefully chosen so that the edges of neighboring arrays sit right next to each other with neither gap nor overlap. The 3 by 3 array corresponds to the three diffraction orders (-1, 0, +1) of the second 2D grating along the horizontal and vertical directions. The inner structure of the second grating also needs to be carefully designed to give equal intensity among these diffraction orders and maximum diffraction efficiency for the incoming beam.
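The "no gap, no overlap" condition can be worked out in one dimension from the grating equation. The sketch below is my own reasoning, not a published specification. It assumes normal incidence, that the spots of the first grating sit on the regular grid of its orders m1 = -M..M (so sin θ steps by λ/d1), and that each order of the second grating shifts sin θ by m2·λ/d2. Under those assumptions the tiles touch exactly when d2 = d1 / (2M + 1). The function name and the numbers (0.83 µm wavelength, 100 µm period, M = 10) are hypothetical.

```python
def tiled_direction_sines(wavelength, period1, max_order1, orders2=(-1, 0, 1)):
    """Return (period2, sorted sin(theta) of every spot) for two 1D gratings
    in sequence, with period2 chosen so that neighboring tiles just touch."""
    # Tiling condition (assumption of this sketch): one order of grating 2
    # must shift the pattern by the full width of one tile, (2M + 1) steps.
    period2 = period1 / (2 * max_order1 + 1)
    sines = []
    for m2 in orders2:
        for m1 in range(-max_order1, max_order1 + 1):
            s = m1 * wavelength / period1 + m2 * wavelength / period2
            if abs(s) > 1.0:
                raise ValueError("order does not propagate (|sin| > 1)")
            sines.append(s)
    return period2, sorted(sines)


if __name__ == "__main__":
    period2, sines = tiled_direction_sines(0.83, 100.0, 10)
    gaps = [b - a for a, b in zip(sines, sines[1:])]
    print("second period (um):", period2)
    print("spots:", len(sines), "min gap:", min(gaps), "max gap:", max(gaps))
```

If the minimum and maximum gaps agree, the spacing across a tile boundary is the same as the spacing inside a tile, which is what "right next to each other" means here. A second period larger than this value would make the tiles overlap, and a smaller one would leave a gap.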

Imagine there is an object (such as a hand) in the above pattern. Its lateral position in this plane can be determined by measuring the distances between the object and three light points in the pattern. This only works with random spots, because in a periodic pattern one group of spots cannot be told apart from another. The position along the propagation direction is calibrated beforehand. Because the whole pattern diverges as it propagates away from the device, the spot pattern imaged at different planes along the propagation path is similar but scaled by a different factor. The distance between corresponding spots therefore varies from plane to plane along the propagation direction, and can be calibrated to determine the longitudinal position of an object in the 3D light pattern.
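The scaling argument can be written down in a few lines. This is only a sketch of the relation as I described it, not the depth algorithm the Kinect actually runs. It assumes every spot leaves the device from a single point at a fixed angle, so a spot at angle θ lands at x = z·tan θ in a plane at distance z, and the spacing between two given spots grows linearly with z. The function names and the numbers are hypothetical.

```python
import math


def spot_positions(depth, angles_deg):
    """Lateral positions where rays at the given angles hit a plane at `depth`."""
    return [depth * math.tan(math.radians(a)) for a in angles_deg]


def depth_from_spacing(spacing, ref_spacing, ref_depth):
    """Depth of the plane in which two known spots are `spacing` apart,
    given their spacing `ref_spacing` measured once at `ref_depth`."""
    return ref_depth * spacing / ref_spacing


if __name__ == "__main__":
    angles = (-3.0, 5.0)                      # two spots of the pattern
    a0, b0 = spot_positions(1.0, angles)      # calibration plane at 1.0 m
    a1, b1 = spot_positions(2.5, angles)      # unknown plane (2.5 m here)
    print(depth_from_spacing(b1 - a1, b0 - a0, 1.0))
```

The calibration amounts to storing `ref_spacing` for known pairs of spots at one known distance. The randomness of the pattern is what lets the same pair be recognized again in another plane.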

We have tried to develop a new technology to generate structured light for motion control, experimenting with random fiber bundles and laser speckle. However, the problem is that the resulting patterns are not repeatable enough for mass production.

